Recently, artificial intelligence (AI) has been front and center in newspapers, trade journals, and social media. It gleams in the limelight the same way a host of past innovations did. Like many scientists, I look at innovations as advancements. However, little did I know what the business outlook on innovation was until I took an economics class during my mid-career MBA studies. Ben Knight, an economist at the University of Warwick, explained that innovation doesn’t make business sense until it becomes an invention and makes commercial or societal sense by making a real difference. As a pharmaceutical industry veteran, I have seen innovations come and go. Remember the “omics” debacle? Well, let’s hope the same doesn’t happen with AI.

Okay, enough said. What exactly is AI? And why are machine learning and big data often talked about whenever AI is referred to? Just google AI and you will find about 1,020,000,000 results (0.52 seconds). No kidding!

The scientific definition of AI comes from a seminal paper by John McCarthy of Stanford. He defines AI as “the science and engineering of making intelligent machines, especially intelligent computer programs. It is related to the similar task of using computers to understand human intelligence, but AI does not have to confine itself to methods that are biologically observable.”

So, let’s be honest. Most “reasonable” people will confuse AI with human intelligence or, at least, some variation of it. And why is that? Simple: the more we can replicate ourselves, the more we should be able to innovate. What better intelligence is there beyond human-directed innovation? McCarthy further states that most work in AI involves studying the problems the world presents to intelligence rather than studying people. In other words, AI is best understood as the development of “intelligent machines.”

Can machines be intelligent? AI researchers would say “yes” and would be quick to refer to Alan Turing’s work from 1950. His paper has been cited 19,311 times (as of the writing of this blog). In fact, a favorite line of mine in his paper is this: “An important feature of a learning machine is that its teacher will often be very largely ignorant of quite what is going on inside, although he may still be able to some extent to predict his pupil’s behavior.” I urge you to read his insightful work to understand the early thinking on AI. In later years, the concepts of machine learning and deep learning were introduced. IBM describes these as simply AI algorithms that help create expert systems that make predictions based on input data.

Now that we’ve identified AI as just another sophisticated data science tool to solve problems, where and how can we apply such powerful tools in new drug development?

I see one immediate application in rare disease drug development. Rare diseases, as the terminology indicates, affect small patient populations whose size depends on disease incidence in each geography. One key limitation is that clinical trials in these populations can enroll only a small number of patients. Here are some potential opportunities where AI can help advance rare disease drug programs.

How do we detect signal from noise?

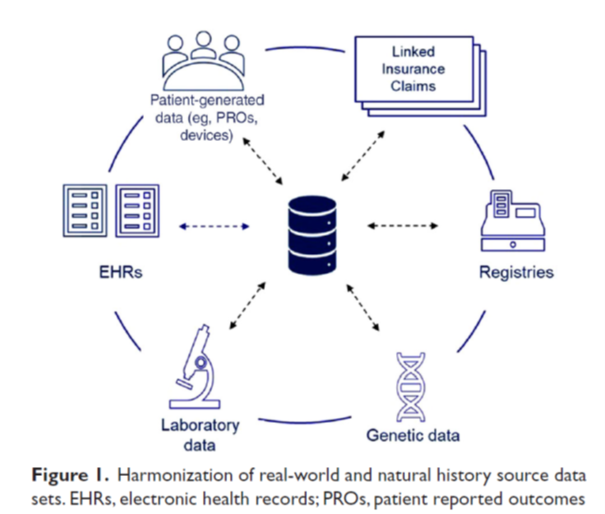

The answer lies in pooling data across the spectrum of rare diseases. Data can be pooled across trial populations and patient registries to account for the natural history of disease. Somewhat ironically, the rare disease ecosystem presents a plethora of rich data and information. Synthesizing that information and generating predictions from it is where AI can help. For a simplified view of the richness of the ecosystem, refer to the article by Liu et al., 2022, and Figure 1.
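To make the pooling idea concrete, here is a minimal sketch of one textbook way to combine estimates from several small data sources: inverse-variance (fixed-effect) weighting. This is my own illustration, not a method taken from Liu et al., and it is far simpler than any AI approach. The function name `pool_fixed_effect` and all the numbers are hypothetical, and the sketch assumes that every source measures the same underlying effect, which is a strong assumption in practice.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Combine per-source effect estimates with inverse-variance weights.

    Assumes every source estimates the same underlying effect
    (a fixed-effect model); this is an illustrative simplification.
    """
    # More precise sources (smaller standard error) get larger weights.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    # The pooled standard error shrinks as more information is added.
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical treatment-effect estimates from two small trials and a registry.
estimates = [0.30, 0.45, 0.25]
std_errors = [0.20, 0.25, 0.15]

pooled, pooled_se = pool_fixed_effect(estimates, std_errors)
print(f"pooled effect: {pooled:.3f}, standard error: {pooled_se:.3f}")
```

The point of the sketch is that the pooled standard error is smaller than that of any single source, which is what lets a signal stand out from the noise of individually small populations.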

How to make a correct diagnosis?

Another area where AI methods and algorithms can assist is the diagnosis of rare diseases, because patients with rare diseases often spend years in the medical system before receiving a proper diagnosis.

NORD undertook studies to quantify these delays. These studies showed that patients typically faced a delay of 6 to 7 years or more. While some rare diseases have established diagnostic precedents, others often go unidentified, leaving the clinician to work through multiple pathology images. An unidentified or misdiagnosed rare disease is difficult to treat. Here, AI algorithms can help mine large and complex pathology images and yield meaningful information for the clinician (see the work of Chen et al., 2022, who describe an AI algorithm for navigating a complex stream of pathology information).

In summary, large and complex information streams present unique opportunities for the AI data scientist to aid in accurately diagnosing rare diseases and in detecting signals in their treatment.

To learn more about how Certara scientists can assist in rare disease development, refer to our article in Technology Networks.

References

- Chen C et al. Fast and scalable search of whole-slide images via self-supervised deep learning. Nature Biomedical Engineering, 1420-1434, 2022.

- Liu J et al. Natural History and Real-World Data in Rare Diseases: Applications, Limitations, and Future Perspectives. Journal of Clinical Pharmacology, 62(S2) S38–S55, 2022.

- McCarthy J. Artificial Intelligence, Logic and Formalizing Common Sense. In Richmond Thomason, editor, Philosophical Logic and Artificial Intelligence. Kluwer Academic, 1989.

- Turing AM. Computing Machinery and Intelligence. Mind 59: 433-460, 1950.